By Stéphanie De la Asuncion, BI Project Director, Mickaël Mackow, BI Project Manager, and Vivien Liochon, MSBI Technical Expert – Micropole Centre-Est.

In early 2018, Microsoft released a data integration service hosted entirely in the cloud: Azure Data Factory. It orchestrates the services that collect raw data and also transforms that data into ready-to-use information. In this article, we review the service: its benefits, its limits, and its use in a concrete case that follows the creation of a data-loading flow with Azure Data Factory.

For several months, if not years, Microsoft has been committed to cloud computing, offering more and more Azure services and encouraging companies to follow suit. The benefit: a single, global management platform that brings together all the services essential to running the company's information system.

Azure Data Factory is a service that frees companies from managing physical or virtual machines to process their data. Its main benefit is avoiding upkeep and maintenance costs, software installations, and so on. It remains possible to process, transform, store, delete, and archive data, notably with SSIS and Azure storage.

AZURE DATA FACTORY: APPLYING IT IN THE ENTERPRISE

- Project context at one of our clients

Our experts set up a BI tool with nightly loading flows, built with Microsoft technologies hosted on Azure virtual machines. Once the tool was in place, change requests led to new daily flows that had to run during the day.

To limit the cost of using the Azure servers, the jobs had been scheduled to shut the servers down at the end of processing. The new daytime flows therefore raised a problem: managing server startup and shutdown.

This constraint led us to explore Azure Data Factory. Because it is a service, there is no server to manage, so the new, simple data flows were developed with it.

The objectives were to:

• Validate the use of this tool in service mode;

• In the long term, move away from the virtual machine infrastructure;

• Store data from the various sources in a consolidated Azure storage space;

• Run the SSIS data-processing flows without having to manage a server;

• Schedule and orchestrate all the loading flows.

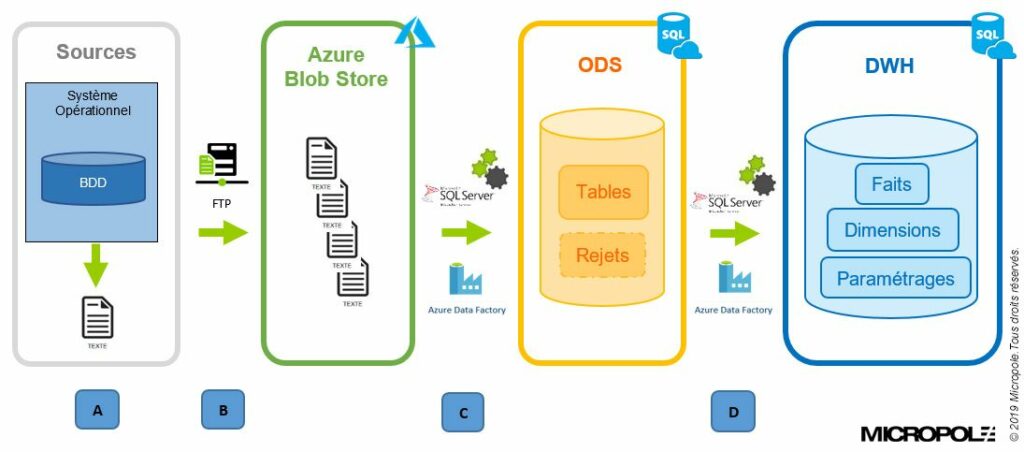

To present the project architecture, this diagram shows how the Azure environment fits into the overall data integration.

A. Extraction of the data into files

B. FTP transfer of the files to Azure storage (blob store)

C. Loading of the files into the ODS (Operational Data Store) via SSIS packages launched from Azure Data Factory

D. Loading of the ODS data into the DWH (data warehouse) via SSIS packages launched from Azure Data Factory
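
Steps C and D can be sketched as a two-activity pipeline in which the DWH load only starts once the ODS load has succeeded. The snippet below builds such a definition as a plain Python dictionary and prints it as JSON. It is an illustrative sketch, not the pipeline used in the project: the pipeline, activity, package, and runtime names are all hypothetical, and the field layout follows the Azure Data Factory pipeline JSON format as we understand it, so it should be checked against the official reference before use.

```python
import json


def ssis_activity(name, package_path, runtime_name, depends_on=None):
    """Build one 'Execute SSIS Package' activity as a plain dict.

    All argument values are placeholders chosen for this example.
    """
    return {
        "name": name,
        "type": "ExecuteSSISPackage",
        # Run only after every listed activity has succeeded.
        "dependsOn": [
            {"activity": dep, "dependencyConditions": ["Succeeded"]}
            for dep in (depends_on or [])
        ],
        "typeProperties": {
            "packageLocation": {"packagePath": package_path},
            "connectVia": {
                "referenceName": runtime_name,
                "type": "IntegrationRuntimeReference",
            },
        },
    }


# Hypothetical names: "DailyLoad", "LoadOds", "LoadDwh", "ssis-ir".
pipeline = {
    "name": "DailyLoad",
    "properties": {
        "activities": [
            # Step C: files -> ODS
            ssis_activity("LoadOds", "BI/Project/LoadOds.dtsx", "ssis-ir"),
            # Step D: ODS -> DWH, chained after step C
            ssis_activity(
                "LoadDwh",
                "BI/Project/LoadDwh.dtsx",
                "ssis-ir",
                depends_on=["LoadOds"],
            ),
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

Expressing the ordering as a dependency between activities, rather than as two separately scheduled jobs, means that a failed ODS load prevents the DWH load from running on stale data.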

- How Azure Data Factory works

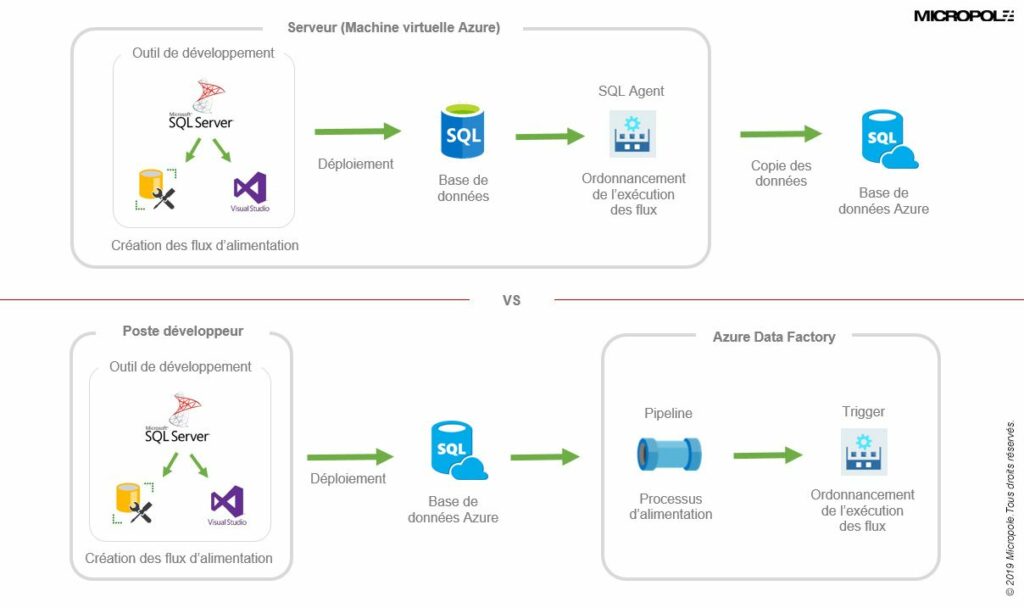

Diagram comparing how a flow runs on a server and how it runs with ADF

Note that in this architecture the main cost of Azure Data Factory is the SSIS Integration Runtime. This is a node that runs SSIS loading flows and to which a SQL Server license is attached. Its performance level can also be configured according to usage and data volume.
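
The effect of running time on the bill can be illustrated with simple arithmetic. This is a minimal sketch that assumes the runtime is billed in proportion to the hours it is started. The hourly rate of 1.0 is a placeholder currency unit, not an actual Azure price, and the function name is ours.

```python
def monthly_runtime_cost(hours_per_day, hourly_rate, days=30):
    """Estimated monthly cost of a runtime billed per hour while started."""
    return hours_per_day * hourly_rate * days


# Placeholder rate: 1.0 currency unit per hour (not a real price).
always_on = monthly_runtime_cost(24, 1.0)  # runtime never stopped
on_demand = monthly_runtime_cost(2, 1.0)   # started only for 2 hours of flows per day

print(always_on, on_demand)  # 720.0 60.0
```

Under these assumptions, a runtime left on all day costs twelve times more than one started only for two hours of daily processing.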

REVIEW AND OUTLOOK

While supporting our client on its BI project, our experts identified the strengths and weaknesses of this recent solution:

Advantages:

• A hosted service, with no on-premises infrastructure;

• Easy to get started with for simple flows;

• Limited costs;

• Development and license management that require no tool installation;

• A scheduler: flows can be triggered on a recurring schedule or by an event.

Drawbacks:

• Tedious management of environments (production, acceptance, development, etc.);

• Starting the service requires particular care: an automatic shutdown must be planned, otherwise costs rise very quickly;

• Not all features are available yet, which makes complex flows difficult to build.
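
The automatic shutdown mentioned in the drawbacks can be sketched as a "start, run, always stop" pattern. The example below is a generic Python illustration, not an Azure API call: `start` and `stop` are hypothetical callables standing in for whatever mechanism actually starts and stops the SSIS Integration Runtime, and the failing flow is simulated.

```python
from contextlib import contextmanager


@contextmanager
def running_runtime(start, stop):
    """Start a runtime, then guarantee it is stopped, even if a flow fails."""
    start()
    try:
        yield
    finally:
        # Runs on success and on failure, so the runtime never stays on by accident.
        stop()


events = []


def run_flows():
    """Simulated loading flows that fail partway through."""
    events.append("flows")
    raise RuntimeError("package failed")


try:
    # The lambdas are stand-ins for real start/stop operations.
    with running_runtime(lambda: events.append("start"),
                         lambda: events.append("stop")):
        run_flows()
except RuntimeError:
    events.append("error handled")

print(events)  # ['start', 'flows', 'stop', 'error handled']
```

The stop step runs before the error is handled, which is the property that matters for cost: a failed flow does not leave the runtime billing overnight.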

Nevertheless, Azure Data Factory makes it easy to create, manage, and monitor loading processes within a single service.

No doubt that in the coming months, with new releases of the tool in V2, the service will evolve to include native components that require no custom development: deleting files from Azure storage, starting and stopping the SSIS Integration Runtime, and many more. To be continued.